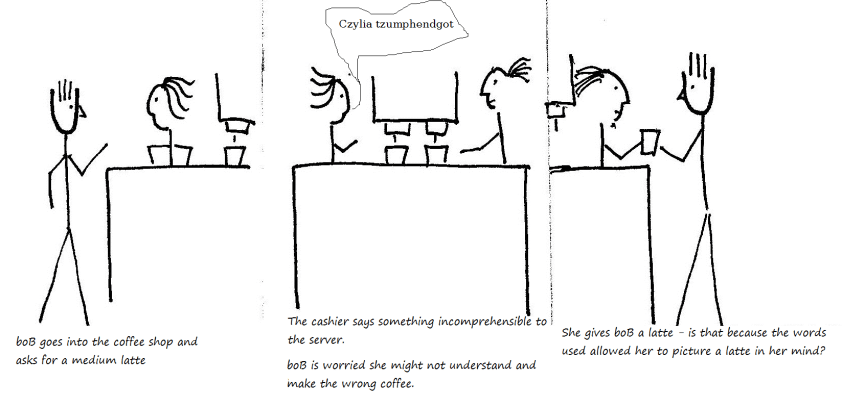

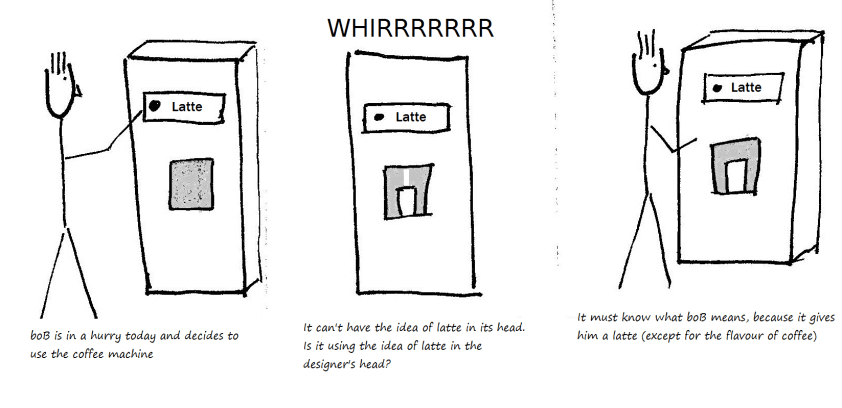

“The meaning of a term is a picture in a person’s head” might help boB talk about how he understands words, but it gets him into a mess when he asks “how do machines understand words?” He can see they have “understood” him because they act in the way he expects them to act, giving him a latte, not an espresso. But they have no head in which to hold an “idea” corresponding to the meaning.

Say that a system understands what I say if it responds in the way that I expect it to. If communication fails, then we can start to ask whether I have misunderstood the words I use or whether the system is behaving badly. But whether the system includes a human or is purely mechanical, I am not going to be able to take it apart, find the “idea in the head”, and see if it matches mine.